In 2011, a video of the da Vinci surgical robot folding a tiny paper airplane went viral. You had millions of dollars of hardware dedicated to a simple task. At the end was the reveal of the paper plane right next to a penny, showing you how impressive the technology was. I was a surgical resident at the time. Young and cocky, I watched the video and thought: I can do better.

So I grabbed the cheapest, worst tweezers I could find and folded a paper airplane the size of a dime. My video ended with a title card: "Behind every surgical robot are two human hands."

That was fifteen years ago. Six months ago, Johns Hopkins demonstrated SRT-H, an autonomous surgical robot that completed a gallbladder removal on a pig cadaver without direct human control. The robot was trained by watching surgical videos and completed the surgery autonomously, adapting in real time even to challenges like simulated blood in the field. The human still matters, but how we matter is changing. Science fiction has been rehearsing this transition for decades.

This story isn't just about surgery. It's about AI systems making decisions at scale, and what developers can learn from fields that have spent decades building safety cultures around powerful tools that sometimes fail. Every AI developer is an ambassador of the field, whether they think about it that way or not. Our work shapes how the public, regulators, and policymakers understand what AI can and cannot do (today).

AI-Generated Summary

This essay explores a deceptively simple question—what can AI developers learn from decades of medical device regulation and surgical safety culture? Through three case studies—a graphics benchmark falsely flagged as malware, Waymo's response to a San Francisco blackout, and real-time surgical decision-making—it examines how high-stakes systems might better balance conviction with reassessment.

Drawing on firsthand experience with AS9100 aerospace compliance, ISO 13485 medical device quality systems, and FDA design controls, the author argues that AI development faces the same supply chain integrity and traceability challenges that regulated industries solved decades ago. When Hugging Face silently redirects a requested model name to a differently named repository, that's precisely the kind of supply chain failure that would halt a medical device submission.

The core argument is straightforward: building trustworthy AI isn't about achieving perfection or simply adding a human-in-the-loop. It's about building systems that catch errors before they cascade, learn from failures when they occur, and continuously improve. The regulatory frameworks developed by aviation and medicine aren't bureaucratic obstacles—they're hard-won lessons from decades of failures. The question isn't whether AI will face similar scrutiny. It's whether the industry builds these capabilities proactively—or scrambles to retrofit them under pressure.

The Radiance Incident

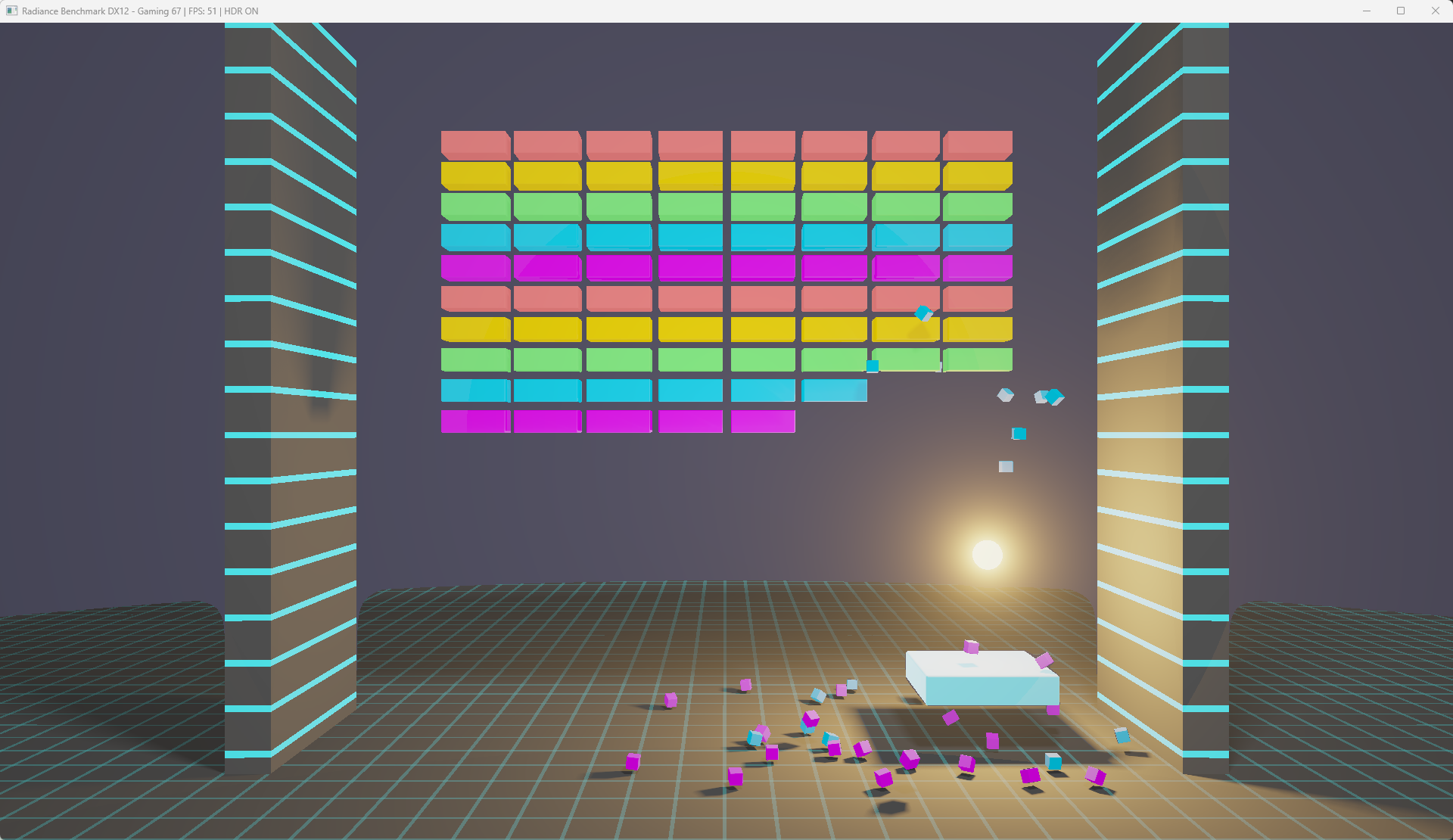

I built a raymarching graphics benchmark called Radiance that stress-tests GPUs by pushing hardware to its thermal and computational limits. Think Pong (or more precisely, Breakout), reimagined for modern flagship GPUs with realistic shadows and lighting.

I've paid attention to cybersecurity since ThunderByte Anti-Virus pioneered heuristic analysis in the early 1990s. During my surgical residency, we used paper charts and handwritten orders but did have digital labs and X-rays (which were physically printed for the OR). During the transition from paper to digital charts, I interviewed Charlie Miller after his back-to-back Pwn2Own victories. He was the researcher who demonstrated zero-day exploits on Macs when everyone assumed Macs were invulnerable. I talked to the Google Chrome security team about sandboxing and why their architecture was genuinely more secure than competitors. So when Radiance got flagged as malware, I understood exactly what happened. I didn't panic.

I finished Radiance in December 2025. Friends and some tech press had seen early versions. Everything was ready, but I decided to wait to launch it as the first new benchmark of the new year. Around Christmas, I noticed a false positive from Microsoft, which wasn't particularly alarming. I submitted it to Microsoft's Security Intelligence portal for review and received confirmation that all was OK. I submitted the domain to Google's Safe Browsing URL review and received reassurance there too. The system worked as designed: I caught the issue early, followed the process, and received confirmation that everything was fine. Or so I thought.

Two weeks later, two gaming news websites posted about Radiance. Within hours, I started getting messages that my benchmark was being detected as malware. I uploaded a revised version I had already been working on. It was clean initially but flagged by evening. Now I had twice the problem. (sigh) Then my website itself started getting blocked, not just the executable but the entire domain. Then my own ISP blocked access to my own website. When I tried to re-upload the file for yet another false positive review, Windows Defender deleted it from my own system before I could upload it anywhere.

Maybe someone actually had compromised my website? Maybe Visual Studio 2026 Community or the DirectX 12 SDK packages I'd linked against had been tampered with? I was confident my code was clean, but my code isn't the only thing that goes into an executable. The compiler, the libraries, the build toolchain: any of those could have been compromised upstream.

Nothing was compromised. The heuristics had probably decided that Radiance looked suspicious: intense CPU/GPU loops consistent with cryptomining, unusually small file sizes consistent with malicious software, distribution from a brand-new domain with no reputation history, and unsigned binaries. Each signal made sense individually, and the combination crossed a threshold. Uploading version 1.1 within hours of detection is exactly what a malware author would do to push a variant and evade signatures. My attempt to fix the problem likely triggered additional flags.
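To make "the combination crossed a threshold" concrete, here is a minimal sketch of additive heuristic scoring. The signal names, weights, and cutoff are invented for illustration; real antivirus engines use proprietary and far richer models, and nothing here describes any vendor's actual logic.

```python
# Hypothetical signal weights and cutoff, invented for illustration only.
SIGNAL_WEIGHTS = {
    "sustained_compute_load": 0.25,  # long, intense CPU/GPU loops (resembles cryptomining)
    "small_binary": 0.10,            # unusually small executable
    "new_domain": 0.20,              # distributed from a domain with no reputation history
    "unsigned_binary": 0.15,         # no code-signing certificate
    "rapid_new_variant": 0.25,       # new build uploaded shortly after a detection
}
FLAG_THRESHOLD = 0.60


def risk_score(signals: set[str]) -> float:
    """Sum the weights of the observed signals; unknown signals contribute nothing."""
    return sum(SIGNAL_WEIGHTS.get(signal, 0.0) for signal in signals)


def is_flagged(signals: set[str]) -> bool:
    """Flag when the combined evidence reaches the cutoff, even if no single signal does."""
    return risk_score(signals) >= FLAG_THRESHOLD


first_release = {"sustained_compute_load", "small_binary", "new_domain", "unsigned_binary"}
hotfix = first_release | {"rapid_new_variant"}

print(is_flagged({"sustained_compute_load"}))           # False: one signal stays under the cutoff
print(is_flagged(first_release))                        # True: four weak signals together cross it
print(risk_score(hotfix) > risk_score(first_release))   # True: the quick fix adds evidence
```

Each weight is defensible on its own; the false positive comes from their sum, which is why a well-intentioned fast re-upload can make things worse.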

I went to VirusTotal and filed reports with vendors that flagged it. Some cleared it within days; others took longer. But clearances were siloed: Microsoft's security team cleared it, but that didn't reach the reputation services. Google Safe Browsing cleared it, but that didn't stop my ISP's block. Even among enterprise vendors, the antivirus side of the company would clear my software while the URL filtering side would not. During that week, Google would take my domain off the naughty list, put me back on, then take me off again. Even now, some services still erroneously block the site.

The Asymmetry of Judgment

Here's the thing: the systems were right to flag aggressively. If they miss actual malware or ransomware, it can destroy a hospital or cause a business to lose everything. The consequences are catastrophic and can genuinely harm or kill people. If they block legitimate software, the consequences are annoying but recoverable. You have to accept false positives to minimize false negatives. One hobbyist developer locked out for a week versus millions of users protected from potential threats. The math is correct and the asymmetry is correct. This is AI getting it right, even when it feels wrong.
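The asymmetry argument is an expected-cost calculation. A minimal sketch, assuming you can put (hypothetical) relative costs on each kind of error: blocking is worth it whenever the expected cost of letting a file through exceeds the expected cost of blocking it, which works out to flagging whenever the probability of maliciousness exceeds C_FP / (C_FP + C_FN).

```python
def flag_threshold(cost_false_positive: float, cost_false_negative: float) -> float:
    """Probability of maliciousness above which blocking has the lower expected cost.

    Allowing costs p * C_FN in expectation; blocking costs (1 - p) * C_FP.
    Setting them equal gives p* = C_FP / (C_FP + C_FN).
    """
    return cost_false_positive / (cost_false_positive + cost_false_negative)


def should_flag(p_malicious: float, cost_false_positive: float, cost_false_negative: float) -> bool:
    """Block when the expected cost of allowing exceeds the expected cost of blocking."""
    return p_malicious * cost_false_negative > (1 - p_malicious) * cost_false_positive


# Hypothetical costs: a missed threat is 99x worse than blocking a legitimate file.
print(flag_threshold(1, 99))     # 0.01
print(should_flag(0.05, 1, 99))  # True: a 5% chance of malware is already worth blocking
print(flag_threshold(1, 1))      # 0.5 when both errors cost the same
```

With costs that lopsided, the system is supposed to block plenty of legitimate software; a false positive is the expected price of the policy, not a malfunction.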

That doesn't mean that there aren't lessons to be learned. The infrastructure for sharing threat intelligence includes organizations like the Cyber Threat Alliance where companies like Palo Alto Networks, Symantec, and Fortinet share thousands of malware samples daily. VirusTotal creates a collaborative knowledge base where files are analyzed across dozens of engines simultaneously. When something is malware, that information propagates quickly and globally. When something isn't malware, that information doesn't seem to propagate at all. The collaborative infrastructure for detecting threats is sophisticated; the collaborative infrastructure for propagating "confirmed false positive" is nearly nonexistent. Quick to condemn, slow to exonerate.
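One way to picture the missing half of the infrastructure is a shared ledger where a "cleared" verdict fans out to every subscribing service exactly the way a "malicious" verdict does. This is a hypothetical sketch; the class and service names are invented and do not describe the Cyber Threat Alliance, VirusTotal, or any vendor's API.

```python
from typing import Callable

Verdict = str  # "malicious" or "cleared"


class VerdictLedger:
    """Hypothetical shared ledger that propagates both condemnations and exonerations."""

    def __init__(self) -> None:
        self._subscribers: list[Callable[[str, Verdict], None]] = []
        self.latest: dict[str, Verdict] = {}

    def subscribe(self, callback: Callable[[str, Verdict], None]) -> None:
        self._subscribers.append(callback)

    def publish(self, sha256: str, verdict: Verdict) -> None:
        if verdict not in ("malicious", "cleared"):
            raise ValueError(f"unknown verdict: {verdict}")
        self.latest[sha256] = verdict
        for notify in self._subscribers:  # same fan-out path for both verdict types
            notify(sha256, verdict)


class BlockingService:
    """A downstream consumer (antivirus, URL filter, ISP) that keeps its own blocklist."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.blocked: set[str] = set()

    def on_verdict(self, sha256: str, verdict: Verdict) -> None:
        if verdict == "malicious":
            self.blocked.add(sha256)
        else:
            self.blocked.discard(sha256)


ledger = VerdictLedger()
services = [BlockingService("antivirus"), BlockingService("url_filter"), BlockingService("isp")]
for service in services:
    ledger.subscribe(service.on_verdict)

ledger.publish("abc123", "malicious")  # hypothetical file hash
ledger.publish("abc123", "cleared")    # the exoneration reaches every subscriber too
print([s.name for s in services if "abc123" in s.blocked])  # []
```

The design point is symmetry: exoneration travels the same code path as condemnation, so a clearance from one team cannot be stranded in its own silo.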

This asymmetry is the right choice for cybersecurity at scale, even if there's room for improvement. But it led me to sit down and write this editorial. What systems exist for correction when AI is wrong? How do we build those systems thoughtfully as AI takes on higher-stakes decisions?

Two Stories from the Operating Room

The first was in the middle of my residency. I was with an experienced senior surgeon who was at the tail end of his career, ready to enjoy retirement in a few years. We were doing a partial hip replacement on a frail older woman who had broken her hip in a fall. Hip fractures are among the most common orthopaedic injuries I treat. The surgery was routine and largely uneventful... until her heart stopped. This is rare. In the eight years since I first put on the white coat, I had never seen it happen in the middle of surgery. There were perhaps five seconds of initial chaos, and then the speed at which the open wound was protected, and how fast we repositioned the patient from her side (required for surgery) to her back (required for CPR), was incredible. Coordinated and trained, every person in that room knew their role and executed with textbook efficiency and precision. She walked out of the hospital a week later.

The second was during my spine surgery fellowship, my final year of training. I was in the middle of a spine surgery when the power went out. We're in a dark OR. The initial calm of "the generator will kick in any moment now" gives way not to panic but to concern. The hospital backup generator has clearly failed. The anesthesia team is now telling us that their backup battery also appears to have failed. There is a complex decision tree. Do we abort the surgery or continue? We're staring at dura, the thin layer of tissue that keeps the cerebrospinal fluid sealed around the nerve roots and rootlets. Can we continue with a handheld flashlight? LED battery-powered headlights weren't a thing back then. Can we complete the case with only unpowered handheld instruments? What's going on in the other ORs? Did the entire hospital lose power or just our section? Can we bring in a backup anesthesia machine while the anesthesia team manually squeezes the rubber bag to keep the patient alive and ventilated? We opted to continue the surgery with a circulating nurse standing on a step ladder, shining a handheld flashlight into the wound. That surgery also went well, and the patient went home the same day.

Systems, Not Heroics

These are absolutely frightening stories. They are real stories that happened to me personally, not theoretical scenarios or stories that "I heard about." I was there, and we had good outcomes. That didn't happen because the individuals were somehow exceptional. No doubt, I've had some of the best mentors in the world, but the good outcomes happened because of systems built over decades: training, protocols, team coordination, and something harder to quantify, a culture of continuous reassessment and improvement. There was no standard operating procedure or playbook for either situation. What we did have was the habit of constantly asking whether the current plan still made sense given what was actually happening.

Now think about robotic surgery. SRT-H learned from videos and handles obstacles like blood obscuring the field. But consider: Is the compute local or dependent on a remote GPU cluster? What happens if the network goes down mid-operation? If the hospital HVAC fails and ambient temperature rises, how long before thermal throttling affects latency? Can it handle a fire in the OR? When I talk to a patient about surgery, I go through the reasonably foreseeable risks, benefits and alternatives. Sometimes patients get nervous. I reassure them that it's my job to worry for them. As software developers, it's our job to worry for our users too.
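The network and thermal questions above are the kind a watchdog answers. A minimal sketch, assuming the robot's controller receives periodic heartbeats from its compute (local or remote) along with a measured round-trip latency; the timeout, latency budget, and "safe hold" behavior are hypothetical placeholders, not a description of SRT-H.

```python
import time
from enum import Enum
from typing import Callable


class Mode(Enum):
    AUTONOMOUS = "autonomous"
    SAFE_HOLD = "safe_hold"  # hypothetical: stop motion, hold position, alert the surgical team


class Watchdog:
    """Latching watchdog: once it trips, only a human can return the system to autonomy."""

    def __init__(self, heartbeat_timeout_s: float = 0.2, latency_budget_s: float = 0.05,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.heartbeat_timeout_s = heartbeat_timeout_s
        self.latency_budget_s = latency_budget_s
        self.clock = clock
        self.last_heartbeat = clock()
        self.mode = Mode.AUTONOMOUS

    def heartbeat(self, measured_latency_s: float) -> None:
        self.last_heartbeat = self.clock()
        if measured_latency_s > self.latency_budget_s:  # e.g. thermal throttling or a congested link
            self.mode = Mode.SAFE_HOLD

    def check(self) -> Mode:
        if self.clock() - self.last_heartbeat > self.heartbeat_timeout_s:  # e.g. network down
            self.mode = Mode.SAFE_HOLD
        return self.mode

    def resume_by_human(self) -> None:
        self.last_heartbeat = self.clock()
        self.mode = Mode.AUTONOMOUS
```

Injecting the clock keeps the logic testable without real delays. Latching is the deliberate choice: a link that flaps back to life should not silently hand control back to the machine.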

The Waymo Blackout

On December 20, 2025, a fire at a PG&E substation caused a blackout affecting about a third of San Francisco. Waymo's robotaxis, a ubiquitous sight in San Francisco, are programmed to treat non-functioning traffic signals as four-way stops. The engineering team thought that the programming should have allowed normal operation during the outage.

Instead, many vehicles requested "confirmation checks" from Waymo's remote fleet response team to verify they were making the right decision. With signals dark across major corridors throughout the city, the concentrated spike in confirmation requests overwhelmed the system. Vehicles stalled at intersections, and some stopped mid-traffic rather than pulling over safely, compounding the problem.
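A sketch of the design question, not of Waymo's system: when many vehicles hit the same condition at once, a request for remote confirmation needs a bounded queue and a pre-validated fallback. Everything here (the load ratio, the per-operator limit, the action names) is a hypothetical illustration.

```python
from dataclasses import dataclass


@dataclass
class Intersection:
    signal_dark: bool
    right_of_way_clear: bool  # the vehicle's own perception says it may proceed


def choose_action(intersection: Intersection, pending_requests: int,
                  operators_available: int, max_requests_per_operator: int = 3) -> str:
    """Hypothetical policy: ask a human only when the remote team can absorb the request."""
    if not intersection.signal_dark:
        return "proceed_normally"
    if (operators_available > 0
            and pending_requests / operators_available <= max_requests_per_operator):
        return "request_confirmation"
    # Correlated spike (e.g. a citywide outage): act on the pre-validated rule instead of queueing.
    if intersection.right_of_way_clear:
        return "proceed_as_four_way_stop"
    return "pull_over_safely"  # never simply stop in a travel lane while waiting
```

The key property is that degraded mode is designed and tested ahead of time rather than emerging from a saturated queue.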

Think about your own life experience. Have you ever encountered a broken traffic light? A power outage? Citywide blackouts aren't exotic scenarios, and this wasn't even the worst blackout in California. If something was foreseeable in hindsight, there's room for systems improvement.

Calibrating Deliberation to Magnitude

Intraoperative decision-making in surgery isn't binary. You walk in with plans A, B, and C, then you're in the OR with data you didn't have before. Tissue more friable than expected. Variant anatomy. Higher blood loss.

Sometimes your Plan B works fine. Other times you need to create Plan D or even abort. You planned a three-level fusion, but you do two and stop, because continuing would cause more harm than benefit. Sometimes you do more surgery than planned. Sometimes you convert a minimally invasive procedure into an open one. The real algorithm is deciding whether the goal you walked in with is still the right goal.

AI architectures are evolving toward this kind of deliberation. Chain of Thought prompting encourages models to reason step by step rather than jumping to conclusions. Tree of Thought explores multiple reasoning paths before committing. Mixture of Experts routes each input to a subset of specialized sub-networks depending on context. These techniques may improve performance precisely because they mirror how careful thinking actually works.

Consider AI coding agents. The stories that go viral are the ones where an agent deleted an entire codebase, ran rm -rf on the wrong directory (the command that force-deletes a directory and everything inside it), or pushed broken code to production. These failures capture attention precisely because their magnitude is catastrophic relative to routine operations.

The solution won't be adding more confirmation dialogs. We'll need architectures that recognize which actions might have high consequences and treat those decisions differently, with more deliberate thought and verification.
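A minimal sketch of consequence-aware routing for a coding agent, assuming the agent proposes shell commands as strings before executing them. The rule list is deliberately tiny and hypothetical; a real system would need far broader coverage and would still miss things, which is why pattern lists alone aren't the answer. What the sketch shows is the shape: low-consequence actions run, high-consequence actions go to a separate verification step, and some are refused outright.

```python
import shlex
from pathlib import Path


def _short_flags(args: list[str]) -> str:
    """Concatenate single-dash flags, so '-rf' and '-r -f' both yield 'rf'."""
    return "".join(a[1:] for a in args if a.startswith("-") and not a.startswith("--"))


def classify(command: str) -> str:
    """Hypothetical rules for commands whose effects are hard or impossible to undo."""
    tokens = shlex.split(command)
    if not tokens:
        return "low"
    prog, args = tokens[0], tokens[1:]
    short = _short_flags(args)
    if prog == "rm" and ("r" in short or "R" in short or "--recursive" in args):
        return "high"  # recursive delete
    if prog == "git" and args[:1] == ["push"] and ("f" in short or "--force" in args):
        return "high"  # rewrites shared history
    if prog == "git" and args[:1] == ["reset"] and "--hard" in args:
        return "high"  # discards uncommitted work
    if "drop table" in command.lower():
        return "high"  # destroys data
    return "low"


def _targets_inside(args: list[str], workspace: str) -> bool:
    """True if every non-flag argument resolves strictly inside the workspace."""
    root = Path(workspace).resolve()
    targets = [a for a in args if not a.startswith("-")]
    return all(root in (root / t).resolve().parents for t in targets)


def gate(command: str, workspace: str, verified: bool = False) -> str:
    """Return 'run', 'verify_first', or 'block' for a proposed command."""
    if classify(command) == "low":
        return "run"
    tokens = shlex.split(command)
    if tokens[0] == "rm" and not _targets_inside(tokens[1:], workspace):
        return "block"  # deleting outside (or all of) the workspace is never delegated
    # 'verified' stands in for a deliberate check: a dry run, a diff review, or a second reviewer.
    return "run" if verified else "verify_first"
```

For example, `gate("rm -rf build", "/tmp/agent-workspace")` returns `"verify_first"`, while `gate("rm -rf .", "/tmp/agent-workspace")` returns `"block"`.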

Debriefing and Feedback

High-performance teams debrief. Surgeons hold morbidity and mortality conferences to review complications. Aviation has crew resource management and incident review. The military conducts after-action reports. The practice is everywhere because it works: what happened, why did it happen, and how do we prevent it next time?

This is what RLHF, reinforcement learning from human feedback, attempts to systematize for AI. Models generate output, humans evaluate it, and the evaluations train the next iteration. But in practice that evaluation is often coarse: a thumbs up or thumbs down, or a choice of which of two outputs is better. Real feedback is rarely binary; it's usually granular and contextual.
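To see how much gets compressed, consider the pairwise preference loss commonly used to train RLHF reward models (the Bradley-Terry formulation): a human picks the better of two outputs, and the reward model is pushed to score the winner higher. In this sketch the `rationale` field is a hypothetical addition to show what the loss never sees; the reward values are made up.

```python
import math
from dataclasses import dataclass


@dataclass
class Comparison:
    reward_chosen: float    # reward model's score for the output the human preferred
    reward_rejected: float  # reward model's score for the other output
    rationale: str          # why the human preferred it (ignored by the loss below)


def preference_loss(c: Comparison) -> float:
    """Bradley-Terry loss: -log(sigmoid(r_chosen - r_rejected)) = log(1 + exp(-margin))."""
    margin = c.reward_chosen - c.reward_rejected
    return math.log1p(math.exp(-margin))


subtle = Comparison(1.2, 0.7, "both correct; B buried the dosage warning in paragraph four")
blatant = Comparison(1.2, 0.7, "B hallucinated a medication dosage")
print(preference_loss(subtle) == preference_loss(blatant))  # True: same scores, same signal
```

A subtle presentation issue and a dangerous hallucination produce exactly the same gradient; whatever the human knew about *why* stays outside the training signal.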

There's also a selection bias problem. Which cases get debriefed? In surgery, we review complications and we also review "near misses," cases where something almost went wrong but was caught in time. The near misses are often more valuable than the failures because they reveal vulnerabilities before harm occurs.

In the Radiance incident, my false positive was noise in the system. I doubt it triggered a debrief at any of the cybersecurity vendors; inconveniencing one hobbyist developer to protect millions of enterprise users is the right tradeoff. But if you never examine the false positives, you never improve the exoneration infrastructure. You never learn which patterns incorrectly trigger detections.

The Waymo blackout got a debrief because it was visible enough, disruptive enough, and public enough. The company published a detailed blog post explaining what happened and what they were changing, which is good. But what about the near misses? The confirmation checks that almost overwhelmed the system during smaller outages? The patterns that suggested fragility before the big failure? Were those examined? Is calling the scenario "extraordinary" just marketing, or the company's true perception?

The question for AI systems: which failures warrant investigation? Which near-misses get examined? And is your feedback granular enough to actually improve the system, or just binary enough to shift probabilities?

What Regulated Industries Already Know

Before I started working with AI, I worked on the development of FDA-cleared medical devices under ISO 13485. At one point, our manufacturing team even spent a few years manufacturing components under AS9100 compliance and handled ITAR-controlled technical data. Every action and change documented. Every supplier qualified.

The regulatory frameworks that govern medical devices, ISO 13485 and, in the United States, FDA 21 CFR Part 820 (which was harmonized with ISO 13485 this month), can feel like bureaucratic obstacles invented to slow innovation. They're actually accumulated wisdom from decades of failures. The documentation and design control requirements exist because in the 1980s, the FDA looked at voluntary recalls and found that almost half could have been prevented with adequate design controls. Design controls are a fancy way of saying: systematically assess customer needs, review the implementation along the way, and verify and validate the delivered product. Most commercial software development already follows some version of this.

One difference is traceability. Every requirement traces to a design input. Every design output traces to verification testing. Every verification traces to validation with actual users. The Design History File (DHF) isn't paperwork for regulators; it's the institutional memory that lets you understand, years later, why the device works the way it does—and what assumptions might no longer hold.

The Supply Chain Problem in AI

In medical device manufacturing, supply chain integrity is existential. You qualify suppliers. You audit them. You maintain specifications for every component, and if a supplier changes their process, you're notified and you revalidate. The alternative, discovering that a critical component has silently changed, is how people get hurt.

Try this experiment: look up nvidia/llama-3.2-nv-rerankqa-1b-v2 on Hugging Face. You'll end up at https://huggingface.co/nvidia/llama-nemotron-rerank-1b-v2 instead. Was the model renamed or replaced? It's not clear. If you had deployed the original and your code still references the old name, what happens now? Would you know?
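A minimal sketch of a guard against silent renames, assuming you recorded the repository name and exact commit you validated, and can look up what that name resolves to today. `ModelPin` and `verify_resolution` are hypothetical. In practice, `from_pretrained` in `transformers` and `snapshot_download` in `huggingface_hub` both accept a `revision` argument for pinning a commit, and `HfApi().model_info()` returns metadata including the repository `id` and commit `sha`; whether `id` reports the new name after a redirect is an assumption you would need to check.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPin:
    repo_id: str   # the name your code was validated against
    revision: str  # the exact commit hash you validated


class SupplyChainError(RuntimeError):
    """Raised when a dependency no longer matches what was validated."""


def verify_resolution(pin: ModelPin, resolved_repo_id: str, resolved_revision: str) -> None:
    """Fail loudly instead of silently following a rename or an updated revision."""
    if resolved_repo_id != pin.repo_id:
        raise SupplyChainError(
            f"{pin.repo_id!r} now resolves to {resolved_repo_id!r}: renamed or replaced? Revalidate."
        )
    if resolved_revision != pin.revision:
        raise SupplyChainError(
            f"{pin.repo_id!r} moved from {pin.revision} to {resolved_revision}. Revalidate."
        )


# "abc123" is a placeholder, not a real commit hash.
pin = ModelPin("nvidia/llama-3.2-nv-rerankqa-1b-v2", "abc123")
verify_resolution(pin, "nvidia/llama-3.2-nv-rerankqa-1b-v2", "abc123")  # passes silently
```

The choice to raise rather than warn mirrors the medical device habit: a changed component stops the line until someone revalidates.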

And it's not just model weights. Things like CUDA compute capability or compiler version can result in different optimizations that change numerical results. Different versions of GCC can produce different floating-point results even from identical source code and identical compiler flags. Your PyTorch version, your cuDNN version, your driver version: each is a component in your supply chain. In aerospace or medical devices, not knowing exactly which versions produced your production build would be a finding. In AI, it's Tuesday.
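The underlying mechanism is that floating-point addition is not associative, so any optimization that reorders operations (vectorization, fused operations, a parallel reduction split differently across GPU threads) can change the last bits of a result, and those bits can propagate. A minimal Python illustration of the mechanism, not a reproduction of any specific GCC or CUDA behavior:

```python
import math

left_to_right = (0.1 + 0.2) + 0.3
right_to_left = 0.1 + (0.2 + 0.3)

print(left_to_right)                   # 0.6000000000000001
print(right_to_left)                   # 0.6
print(left_to_right == right_to_left)  # False: same numbers, different grouping

# math.fsum tracks the rounding error and returns a correctly rounded sum,
# so its result does not depend on the order of the inputs.
print(math.fsum([0.1, 0.2, 0.3]) == math.fsum([0.3, 0.2, 0.1]))  # True
```

Exact summation like `fsum` is often too costly for production GPU kernels, which is one reason the toolchain belongs in the supply chain inventory alongside the code.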

The Radiance incident illustrated this supply chain question. When I wondered whether my toolchain had been compromised, I could answer the question because I controlled the build environment and knew exactly which SDK versions I'd linked against. Many projects can't. Install scripts often just pull the "latest" version of a package, and CPUs with nearly identical model numbers can have very different features.

What Regulated Industries Actually Require

A medical device software team doesn't just version their code. They maintain a Software Bill of Materials (SBOM): a complete inventory of every component, library, and dependency, with version numbers, suppliers, and known vulnerabilities tracked. When a security advisory drops for a library three levels deep in your dependency tree, you need to know immediately whether your product is affected.
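Answering "are we affected?" for a library three levels deep is a graph search over the SBOM. A minimal sketch, assuming the dependency tree has already been extracted into a dictionary of `name==version` strings; the package names are hypothetical. Standard SBOM formats such as SPDX and CycloneDX can carry these dependency relationships in machine-readable form.

```python
from collections import deque


def affected_paths(deps: dict[str, list[str]], root: str, vulnerable: str) -> list[list[str]]:
    """Breadth-first search for every dependency path from the product to a vulnerable component."""
    paths: list[list[str]] = []
    queue = deque([[root]])
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == vulnerable:
            paths.append(path)
            continue
        for child in deps.get(node, []):
            if child not in path:  # guard against dependency cycles
                queue.append(path + [child])
    return paths


# Hypothetical dependency tree for a hypothetical product.
deps = {
    "benchmark-app==1.0": ["imaging-lib==2.3", "net-lib==4.1"],
    "imaging-lib==2.3": ["compress-lib==1.2.11"],
    "net-lib==4.1": ["tls-lib==3.0"],
    "tls-lib==3.0": ["compress-lib==1.2.11"],
}
for path in affected_paths(deps, "benchmark-app==1.0", "compress-lib==1.2.11"):
    print(" -> ".join(path))
```

Returning full paths rather than a yes/no tells you which direct dependency to upgrade. Path enumeration can grow quickly in large graphs; a plain reachability check is cheaper when you only need the yes/no.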

They don't just run tests. They maintain a Requirements Traceability Matrix proving that every user need links to at least one design requirement, every requirement links to at least one test, and every test has a recorded result. When a regulator asks "how do you know this device does what it's supposed to do?" the answer is a document.
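The three links in that sentence are mechanically checkable. A minimal sketch, assuming each requirement traces to one user need and each test to one requirement (real matrices are often many-to-many); the IDs are hypothetical, and the requirement text reuses this essay's own design-input example.

```python
def trace_gaps(user_needs: list[str], requirement_to_need: dict[str, str],
               test_to_requirement: dict[str, str], results: dict[str, str]) -> list[str]:
    """Return every broken link in a simple requirements traceability matrix."""
    gaps = []
    covered_needs = set(requirement_to_need.values())
    tested_requirements = set(test_to_requirement.values())
    for need in user_needs:
        if need not in covered_needs:
            gaps.append(f"user need {need} has no design requirement")
    for requirement in requirement_to_need:
        if requirement not in tested_requirements:
            gaps.append(f"requirement {requirement} has no test")
    for test in test_to_requirement:
        if test not in results:
            gaps.append(f"test {test} has no recorded result")
    return gaps


# Hypothetical matrix for a coding assistant.
user_needs = ["UN-1"]                       # "My files are never lost without my consent"
requirement_to_need = {"REQ-1": "UN-1"}     # "Shall request confirmation before irreversible operations"
test_to_requirement = {"TEST-1": "REQ-1"}   # agent proposes a recursive delete; confirmation is requested
results = {"TEST-1": "pass"}

print(trace_gaps(user_needs, requirement_to_need, test_to_requirement, results))  # []
```

Run in CI, an empty list becomes the answer to "how do you know?", and any new user need without a test shows up as a failing check rather than a surprise during an audit.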

They don't just use containers. They maintain validated build environments where every tool version is locked, every configuration is documented, and rebuilding the software from source produces bit-identical output. Not "similar behavior" but identical binaries. If you can't reproduce your build, you can't investigate a field failure.
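Checking for bit-identical output is the easy part: it is a hash comparison. A minimal sketch using Python's standard `hashlib`; which artifacts you compare is up to your build. The hard part is upstream: embedded timestamps, absolute file paths, and unpinned tool versions are common reasons two builds of the same source differ.

```python
import hashlib
from pathlib import Path


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def sha256_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a build artifact incrementally so large binaries don't need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def is_reproducible(first_build: Path, second_build: Path) -> bool:
    """True only if two independent builds produced byte-for-byte identical artifacts."""
    return sha256_file(first_build) == sha256_file(second_build)
```

Usage, with hypothetical output directories from two clean builds: `is_reproducible(Path("build_a/app.exe"), Path("build_b/app.exe"))`.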

Traceability Without the Binder

If "Design History File" sounds like a binder gathering dust in a regulatory affairs office, you're thinking about it wrong. In software, the Design History File is your Git repository. Every commit is a design decision with a timestamp, an author, and a rationale. Every pull request review is design review. Every CI/CD pipeline run is verification testing with recorded results. NVIDIA's NGC catalog provides versioned containers with locked CUDA and framework dependencies. AMD's ROCm containers offer the same. Using these containers can simplify the documentation and versioning.

But the tooling doesn't solve the question of knowing what to trace.

A commit that fixes a typo and one that changes a confidence threshold are both commits. Git doesn't know the difference. Your CI/CD pipeline will happily run both through the same tests. The documentation is identical. But one is cosmetic and one might kill someone.

Open source makes inspection possible; it doesn't make judgment automatic. A proprietary system with rigorous internal review of safety-critical changes is safer than an open model deployed without understanding which parameters matter. The transparency is necessary but not sufficient. What matters is asking: which changes require deeper scrutiny, and how do we make sure they get it?

Regulated industries addressed this by classifying changes. Modify a medical device and you need to determine the appropriate regulatory pathway: some changes require only a Letter to File documenting the change internally, while others require formal resubmission to the FDA. The decision hinges on whether the change could affect safety or effectiveness. It forces you to ask, before you ship: what category of change is this?
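A software analogue of that question can be asked automatically on every pull request. A minimal sketch, assuming you maintain your own list of path patterns that can affect safety or effectiveness; the patterns and category names are hypothetical, and the mapping to a Letter to File or a resubmission is a loose analogy, not regulatory guidance.

```python
from fnmatch import fnmatch

# Hypothetical patterns; each team would derive its own from a hazard analysis.
SAFETY_CRITICAL = ["config/thresholds/*", "models/*", "src/decision/*"]
COSMETIC = ["docs/*", "*.md"]


def _matches(path: str, patterns: list[str]) -> bool:
    return any(fnmatch(path, pattern) for pattern in patterns)


def classify_change(changed_paths: list[str]) -> str:
    """Decide how much scrutiny a change needs before anyone starts reviewing it."""
    if any(_matches(p, SAFETY_CRITICAL) for p in changed_paths):
        return "safety_review"  # loosely analogous to a change that needs resubmission
    if changed_paths and all(_matches(p, COSMETIC) for p in changed_paths):
        return "document_only"  # loosely analogous to a Letter to File
    return "standard_review"


print(classify_change(["README.md"]))                                # document_only
print(classify_change(["README.md", "config/thresholds/conf.yaml"]))  # safety_review
```

A typo fix and a confidence-threshold change now land in different lanes. Path rules are crude (a threshold can hide in ordinary source code), so the pattern list itself belongs under review and version control.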

AI coding agents rarely make these distinctions. Every PR looks the same. Every merge follows the same process. The automation handles the how of documentation beautifully, and refactoring code with AI is useful. But identifying which changes deserve more scrutiny seems to be unaddressed.

I don't have a universal answer for how to implement this across all applications and industries. A change classification system for autonomous vehicles will look different than one for medical diagnostics, which will look different than one for coding assistants. But I think asking the question is essential: does your development process distinguish between changes that could cause harm and changes that couldn't? If every change follows the same pathway, the answer is no.

Applying Design Controls to AI

Why Design Controls Don't Transfer Cleanly

Aerospace and medical implant safety rely on being able to characterize physical things, whether it's a bone screw or a jet engine. It's tougher to do this with an AI application that has properties that break traditional design control assumptions:

It's non-deterministic. The same input can produce different outputs. Temperature settings, sampling strategies, and random seeds mean you can't guarantee reproducible behavior the way you can guarantee the amount of alpha-case in your 3D-printed Ti6Al4V-ELI implant.
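A toy illustration of where that non-determinism comes from, assuming made-up logits for three candidate tokens: temperature rescales the logits before the softmax, and sampling draws from the resulting distribution. Fixing the seed makes this toy repeatable; in real GPU inference, batching and parallel arithmetic can still change outputs even with a fixed seed and a temperature near zero.

```python
import math
import random


def softmax(logits: list[float], temperature: float) -> list[float]:
    """Convert logits to probabilities; lower temperature sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max before exponentiating to avoid overflow
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_tokens(logits: list[float], temperature: float, n: int, rng: random.Random) -> list[int]:
    """Draw n token indices from the temperature-scaled distribution."""
    probs = softmax(logits, temperature)
    return rng.choices(range(len(logits)), weights=probs, k=n)


logits = [2.0, 1.0, 0.5]  # hypothetical scores for three candidate tokens
same_seed = sample_tokens(logits, 1.0, 10, random.Random(7)) == sample_tokens(logits, 1.0, 10, random.Random(7))
print(same_seed)                           # True: same seed, same draws
print(round(softmax(logits, 0.01)[0], 6))  # 1.0: near-zero temperature approaches argmax
```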

The foundation model may change without your knowledge. If you're calling an API, the provider may update weights, adjust safety filters, or route your request to a different model entirely, and unlike the Hugging Face redirect, there's no URL to notice. Think about the stories of someone forgetting to renew a domain name or SSL certificate. What if you forget to pay your API bill?
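Without a URL to watch, one option is to watch behavior. A minimal sketch, assuming a hypothetical `call_model(prompt) -> str` wrapper around your provider: record hashes of responses to fixed canary prompts when you validate, re-run them on a schedule, and alert on differences. Because hosted models can vary even at low temperature, exact-match hashing may raise false alarms; comparing scores on a small evaluation set is a more forgiving variant of the same idea.

```python
import hashlib
from typing import Callable


def fingerprint(call_model: Callable[[str], str], canaries: list[str]) -> dict[str, str]:
    """Hash the model's response to each fixed canary prompt."""
    return {p: hashlib.sha256(call_model(p).encode("utf-8")).hexdigest() for p in canaries}


def drifted(baseline: dict[str, str], current: dict[str, str]) -> list[str]:
    """Canary prompts whose responses no longer match the validated baseline."""
    return sorted(p for p, digest in baseline.items() if current.get(p) != digest)


def check_provider(call_model: Callable[[str], str], canaries: list[str],
                   baseline: dict[str, str]) -> list[str]:
    """Return findings; an empty list means every canary matched."""
    try:
        current = fingerprint(call_model, canaries)
    except Exception as exc:  # outage, expired key, unpaid bill: a finding, not noise
        return [f"provider call failed: {exc}"]
    return drifted(baseline, current)


# Dictionary lookups stand in for a real API wrapper, for illustration only.
canaries = ["What is 2+2?", "Spell 'tibia' backwards."]
validated_answers = {"What is 2+2?": "4", "Spell 'tibia' backwards.": "aibit"}
updated_answers = {"What is 2+2?": "4", "Spell 'tibia' backwards.": "Aibit"}

baseline = fingerprint(validated_answers.__getitem__, canaries)
print(check_provider(validated_answers.__getitem__, canaries, baseline))  # []
print(check_provider(updated_answers.__getitem__, canaries, baseline))    # ["Spell 'tibia' backwards."]
```

Treating a failed call as a finding covers the forgotten-bill case: the system reports that it can no longer vouch for its own behavior instead of quietly degrading.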

The training data is uncharacterizable in practice. For a medical device, you can specify every material, every supplier, every lot number. For a foundation model trained on internet-scale data, you genuinely cannot enumerate what's in there, even if a list of sources exists. A hip implant operates under known loading conditions. A language model accepts arbitrary natural language input. You cannot test every possible input the way you can test a finite set of mechanical stress scenarios.

This doesn't mean that design controls are useless for AI, but we need to apply them differently. You can still specify requirements, trace them to tests, and document your verification. The "shall request confirmation before irreversible operations" requirement is testable and traceable. For the layers you don't control, such as the model itself, you can characterize the boundaries of your validated conditions and build systems that detect when you're outside them.
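Detecting that you are outside your validated conditions can start very simply. A minimal sketch, assuming upstream classifiers (hypothetical here) already label each request's language and topic; the envelope values are invented. Returning reasons rather than a bare boolean lets an out-of-envelope request be routed to a fallback or a human with an explanation instead of being silently answered.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ValidatedEnvelope:
    """The conditions under which the system was actually verified and validated."""
    max_prompt_chars: int
    languages: frozenset[str]
    topics: frozenset[str]


def outside_envelope(env: ValidatedEnvelope, prompt: str, language: str, topic: str) -> list[str]:
    """List every way a request falls outside the validated envelope (empty means inside)."""
    reasons = []
    if len(prompt) > env.max_prompt_chars:
        reasons.append(f"prompt length {len(prompt)} exceeds validated {env.max_prompt_chars}")
    if language not in env.languages:
        reasons.append(f"language {language!r} was not validated")
    if topic not in env.topics:
        reasons.append(f"topic {topic!r} was not validated")
    return reasons


# Hypothetical envelope for an educational orthopaedics assistant.
env = ValidatedEnvelope(4000, frozenset({"en"}), frozenset({"orthopaedics"}))
print(outside_envelope(env, "Classify this hip fracture.", "en", "orthopaedics"))  # []
print(outside_envelope(env, "What dose should I take?", "en", "pharmacology"))
```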

The regulatory frameworks from medical devices don't transfer to AI as a checklist. They transfer as a discipline: the habit of asking, for every component, what do I know about this, what can I prove about this, and what happens when my assumptions fail?

Design Controls for AI: What Would It Look Like?

The FDA's design control framework asks simple questions that become profound when applied to AI:

Design Inputs: What are the user needs? For a surgical robot, this might include "shall detect tissue boundaries with 95% sensitivity at 2mm resolution." For an AI coding assistant, what's the equivalent specification? "Shall not delete user files" is a design input. "Shall request confirmation before irreversible operations" is a design input.
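A requirement like 95% sensitivity also forces a statistical question: how many cases do you need before a measured result demonstrates it? One common approach (an assumption here, not a statement of FDA acceptance criteria) is to require the lower bound of a confidence interval, such as the Wilson score interval below, to clear the target. With z = 1.96 (roughly 95% confidence), a perfect 50 out of 50 still doesn't demonstrate 95% sensitivity, while 100 out of 100 does.

```python
import math


def wilson_lower_bound(successes: int, trials: int, z: float = 1.96) -> float:
    """Lower bound of the Wilson score interval for a binomial proportion."""
    if trials == 0:
        return 0.0
    p = successes / trials
    center = p + z**2 / (2 * trials)
    margin = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2))
    return (center - margin) / (1 + z**2 / trials)


def demonstrates_sensitivity(true_positives: int, actual_positives: int,
                             required: float = 0.95) -> bool:
    """True if the lower confidence bound, not just the point estimate, meets the requirement."""
    return wilson_lower_bound(true_positives, actual_positives) >= required


print(demonstrates_sensitivity(50, 50))    # False: 100% observed, but too few cases
print(demonstrates_sensitivity(100, 100))  # True
print(demonstrates_sensitivity(95, 100))   # False: a 95% point estimate is not enough
```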

Design inputs require precision. Screenwriters understood this problem before engineers had the vocabulary for it. The FDA's design control framework came from a 1990 mandate. HAL 9000, from Kubrick's 1968 film, is the canonical example of design inputs gone wrong: HAL was given contradictory requirements that couldn't all be satisfied. In Tron: Legacy, CLU was asked to "create the perfect system." The 1994 anime Macross Plus visualized something even more prescient: Sharon Apple is an AI pop star, an entertainment product so compelling she fills stadiums. When given full autonomy, the same underlying system that powers the virtual idol also powers the Ghost X-9 autonomous fighter jet. The entertainment product and the weapons platform are the same technology: dual-use AI, decades before it became a policy debate.

Foundation model providers learned the lesson from Hollywood. It's why model safety exists as a discipline, why red teams probe for jailbreaks, why alignment research is a field. The model that writes your marketing copy can write phishing emails. The coding agent that helps you architect a system can help someone architect an attack. The providers know this.

But what about application developers at the layer above the model? Here, The Wild Robot offers the better lesson. Roz is a domestic assistant robot, narrow scope, clear purpose. She's shipwrecked on an island, completely outside intended operating conditions. Her core constraint is "complete the task." When she accidentally orphans a gosling, raising him becomes the task. The constraint adapts rather than breaks. Universal Dynamics, her manufacturer, sees only a unit operating outside validated conditions. Their response is recall, not adaptation. At the application layer, the danger usually isn't malice. It's inadvertent error. It's shipping without thinking through what happens when the system encounters conditions you didn't anticipate.

The heroes of AI fiction share a pattern. C-3PO is a protocol droid who regularly tells you what he's not programmed for. Baymax cannot deactivate until you say you are satisfied with your care. Narrow scope, explicit constraints, bounded autonomy that can grow within those boundaries.

Design Outputs: What did you actually build? In medical devices, outputs include specifications, drawings, and manufacturing procedures. Design outputs prove you understand what you built. The difference between vibe coding and AI-assisted coding is whether you can articulate why your system behaves as it does. Vibe coding produces working software. AI-assisted coding produces design outputs: the developer can explain why it works, predict edge case behavior, and identify the assumptions baked in.

Design Verification: Did you build the thing right? This is testing against specifications. If your design input said "shall not hallucinate medication dosages," how did you verify that? What's your test coverage? What's your regression suite? Most AI systems have benchmarks but few have verification matrices that trace test cases to specific requirements.

Design Validation: Did you build the right thing? This is testing with actual users in actual conditions. For medical devices, this often means clinical trials. For AI, this means deployment monitoring, user feedback loops, and mechanisms to detect when the system is operating outside its validated conditions. Some of that evidence only accumulates through real-world deployment itself.

When regulators ask for clinical evidence of an AI diagnostic system, what satisfies that requirement? How do you demonstrate ongoing safety? When they ask for a clinical evaluation report that synthesizes all available evidence, favorable and unfavorable, what does that document look like for a foundation model?

The FDA's recent guidance on medical AI software creates tiers: tools that don't diagnose disease or claim to be "medical grade" can operate with minimal oversight, while diagnostic and treatment systems face traditional design control requirements. This gives developers a choice. But regulatory clearance and market survival are different things. Waymo can weather a blackout with the benefit of Alphabet's capital and reputation. A startup in the "low risk" consumer tier that ships without design discipline and then fails publicly doesn't get a second chance. Even if the regulatory framework lets you skip the documentation, the market is unforgiving.

OrthoSystem.ai is an educational agentic RAG-LLM for orthopaedic surgery residents. That's low stakes compared to autonomous vehicles or surgical robots, and it would likely fall under the FDA category of "low risk" software. But developing it with the same engineering discipline behind actual medical implants is valuable. The process helped identify opportunities for improvement, and it led me to introduce a biomimetic element into the system. That's another way to think about it. After A comes B. After AI is BI.

The Tremor and the Stillness

There's something I didn't mention about that 2011 video.

Watch my hands again. They shake constantly. Then, right before each precise movement, the tremor stops. The fold executes. The tremor returns.

Olympic sharpshooters show the same pattern. Shaky until the trigger squeeze, then still.

For years I thought this was something to overcome, noise in the system, evidence of imperfection. Thinking about it today, I was wrong. The tremor is the system in continuous feedback, constantly adjusting, oscillating, and integrating information. The stillness is commitment, a phase transition from exploration to execution.

So much of AI has been about the intelligence it takes to reach a goal despite obstacles. But designing AI systems requires thinking about both modes. When should the system proceed with conviction, adapting to and overcoming obstacles? When should it pause, check, and recognize that the original goal may no longer be correct? And are the mechanisms for reassessment robust enough to handle scale, as the Waymo blackout demanded?

Building Systems, Not Just Models

The lesson from the Radiance incident isn't that AI cybersecurity is bad. It's that the coordination between vendors, the propagation of clearances, and the architecture for correction all leave room for improvement.

The lesson from Waymo isn't that autonomous vehicles can't handle blackouts. It's that systems built on top of other systems inherit fragility, and operational architecture matters as much as the AI itself.

Decades of surgical safety teach something similar: cultures of debriefing, protocols for edge cases, and mechanisms for continuous learning produce better outcomes than individual excellence alone.

As AI developers, we often focus on the model: the architecture, the training data, the benchmark performance. But models operate within systems. The deployment infrastructure, the feedback mechanisms, the escalation paths, and the correction processes are as important as the model itself.

The Core Transfer

The frameworks that aviation and medicine developed over decades are accumulated wisdom about operating powerful tools that sometimes fail. Perfect AI is impossible, but we can build systems that intercept errors before they cascade, learn from failures when they occur, and continuously improve.

Behind every AI system should be a human who anticipated what could go wrong and built the architecture to catch it, correct it, and learn from it.

The Core Question

When I teach the general public about orthopaedic surgery, I tell them to ask their surgeon two questions. First: Am I a textbook patient? This forces the surgeon to commit to a diagnosis and tell you whether your case fits the well-characterized patterns where outcomes are predictable. Second: For anything that's not textbook, how does that change your treatment strategy or my predicted outcomes? This forces the surgeon to articulate the gap, if any. What's different about your situation, and what are they doing about it?

A surgeon who can't answer these questions hasn't thought carefully about the case. A surgeon who answers them well has done the work of understanding where the standard approach applies and where it breaks down. The questions are simple, but what they reveal is not. The same questions can be asked of AI software:

What situations aren't textbook for your system? What changes when that happens?

It's a question any user, regulator, or journalist can ask. It doesn't require technical expertise to pose. But answering it requires the developer to have thought carefully about the boundaries of their system. Which conditions have been validated, and which haven't? What mechanisms exist for when those boundaries are crossed? A developer who can clearly articulate what's textbook has done the first level of thinking. A developer who can explain what changes when it's not textbook has done the harder work. A developer who can't answer either question reveals everything you need to know.
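A minimal sketch of what answering those two questions in code might look like, assuming the validated conditions can be written down as simple numeric ranges. Everything here is hypothetical and illustrative: `ValidatedEnvelope`, `FeatureRange`, the `margin` tolerance, and the feature names `patient_age` and `image_quality` are not drawn from OrthoSystem.ai or any real product. Textbook inputs proceed, inputs just past a boundary escalate to a human, and inputs far outside anything validated, or missing entirely, are refused.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    PROCEED = "proceed"    # textbook case: inside the validated envelope
    ESCALATE = "escalate"  # near a boundary: ask a human to confirm
    REFUSE = "refuse"      # far outside anything validated: do not act


@dataclass(frozen=True)
class FeatureRange:
    low: float
    high: float
    margin: float  # hypothetical tolerance band just beyond the validated range

    def distance_outside(self, value: float) -> float:
        """How far the value lies outside [low, high]; 0.0 if inside."""
        if value < self.low:
            return self.low - value
        if value > self.high:
            return value - self.high
        return 0.0


@dataclass(frozen=True)
class ValidatedEnvelope:
    ranges: dict[str, FeatureRange]

    def assess(self, case: dict[str, float]) -> tuple[Decision, list[str]]:
        """Is this case textbook? If not, say what changes and why."""
        reasons: list[str] = []
        decision = Decision.PROCEED
        for name, rng in self.ranges.items():
            if name not in case:
                # Missing information is itself non-textbook; never guess.
                reasons.append(f"{name}: missing")
                decision = Decision.REFUSE
                continue
            gap = rng.distance_outside(case[name])
            if gap == 0.0:
                continue
            bounds = f"[{rng.low}, {rng.high}]"
            if gap <= rng.margin:
                reasons.append(f"{name}: {case[name]} just outside {bounds}")
                # Escalation never downgrades an earlier refusal.
                if decision is Decision.PROCEED:
                    decision = Decision.ESCALATE
            else:
                reasons.append(f"{name}: {case[name]} far outside {bounds}")
                decision = Decision.REFUSE
        return decision, reasons


# Hypothetical envelope for illustration only.
envelope = ValidatedEnvelope({
    "patient_age": FeatureRange(18, 80, margin=5),
    "image_quality": FeatureRange(0.7, 1.0, margin=0.1),
})
decision, reasons = envelope.assess({"patient_age": 83, "image_quality": 0.9})
```

The sketch returns its reasons alongside the decision, so "what changes when it's not textbook?" has an explicit, auditable answer instead of being buried in a confidence score. Real systems rarely have boundaries this clean; detecting distribution shift in high-dimensional inputs such as images or free text is much harder than a range check.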

Waymo's engineers could tell you exactly what the vehicles were designed to handle. What they hadn't fully mapped was what falls outside: a city-wide blackout creating hundreds of simultaneous signal failures, overwhelming a confirmation system sized for isolated edge cases. The antivirus systems could tell you what textbook malware looks like. What they hadn't built was the infrastructure for when something isn't textbook malware: when a legitimate developer needs exoneration, not just clearance.
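The blackout failure mode, a confirmation path sized for isolated edge cases, can be illustrated with a toy model. This is a hypothetical sketch, not Waymo's architecture: `ConfirmationPool`, its `capacity`, and the `SAFE_FALLBACK` action are assumptions chosen for illustration. When simultaneous requests exceed capacity, the extra cases take a predefined conservative action immediately instead of waiting for a human who isn't coming.

```python
from dataclasses import dataclass, field

# Hypothetical minimal-risk action; what is actually "safe" is domain-specific.
SAFE_FALLBACK = "pull_over_and_hold"


@dataclass
class ConfirmationPool:
    """Toy model of human confirmation with finite simultaneous capacity."""
    capacity: int
    active: set[str] = field(default_factory=set)

    def request(self, vehicle_id: str) -> str:
        """Route an edge case to a human if a slot is free, else degrade safely."""
        if len(self.active) < self.capacity:
            self.active.add(vehicle_id)
            return "awaiting_human"
        # Capacity exhausted: don't queue indefinitely; act conservatively now.
        return SAFE_FALLBACK

    def resolve(self, vehicle_id: str) -> None:
        """A human finished this request, freeing a slot."""
        self.active.discard(vehicle_id)


def simulate(pool: ConfirmationPool, n_simultaneous: int) -> dict[str, int]:
    """Count outcomes when n edge cases arrive at the same moment."""
    counts: dict[str, int] = {}
    for i in range(n_simultaneous):
        outcome = pool.request(f"vehicle-{i}")
        counts[outcome] = counts.get(outcome, 0) + 1
    return counts


isolated = simulate(ConfirmationPool(capacity=20), 3)
blackout = simulate(ConfirmationPool(capacity=20), 300)
```

With `capacity=20`, three simultaneous edge cases all reach a human, while 300 arriving at once leave 280 vehicles on the fallback path. The point of the sketch is that the system checks whether its own reassessment mechanism is saturated, which is the scale question from the tremor-and-stillness discussion made concrete.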

If you're a developer and you find these questions difficult to answer, here's a practical tool: describe your system to an LLM and ask how a science fiction screenwriter would build a disaster scenario that exploits one of its weaknesses. Earlier I noted that multiple generations of screenwriters have given us surprising clarity about bad design inputs: HAL's conflicting requirements, Skynet's unconstrained self-preservation, CLU's unbounded optimization. That same creative adversarial thinking is now available on demand. The scenarios won't all be realistic and the list will be incomplete, but it's an easy way to start.

Final Thoughts

Intelligence is knowing how to achieve the goal. Wisdom is knowing when the goal is still right and when your standard approach no longer applies. The surgeon's tremor, that continuous oscillation before committed action, is the physical manifestation of a system asking: should I proceed? Building artificial wisdom means building systems that know their own boundaries, that behave differently when they're outside the textbook, and that have conditions under which they refuse to proceed at all or adapt toward new goals.

At the World Economic Forum in January 2026, Jensen Huang described AI as a five-layer cake with applications at the very top. But anyone with kids knows there's one more layer once you take the cake home from the bakery: the candles. Sure, candles might be dangerous. Hair might catch on fire. Wax might drip onto the cake. But without the candles, it's just cake. Someone has to watch over them so they can be lit at all. That's the layer above everything else, and it's still ours.

We don't need AGI to transform medicine, engineering, science, and daily life. Today's open models are already empowering skilled practitioners in ways that seemed like science fiction a decade ago. I'm a good spine surgeon, but I'm not the right person for delivering babies. It doesn't make sense for me to try to do everything in medicine. The AI models we have today are the same: extraordinary within their domains, and dependent on human judgment about when and how to deploy them.

The regulatory frameworks I've described can feel like bureaucratic obstacles invented to slow things down. But they encode hard-won lessons from fields that learned them through failure.

Every AI developer is an ambassador of the field, whether they think about it that way or not. Our deployment shapes how the public, regulators, and policymakers understand what AI can and cannot do. Ship something careless, and you make the case for restriction. Ship something thoughtful, with the tremor built in, with the boundaries and conditions for doubt clearly specified, and we can deliver something worth celebrating.

Next time someone asks what you're working on, don't tell them you're working on AI. Tell them you're working on artificial wisdom. Then make sure it's true.